AI Hallucinations Are a Feature, Not a Bug

Why the “mistakes” tell you what the system is really optimized for

AI hallucinations are usually framed as failures.

The model made something up.

It cited a source that doesn’t exist.

It confidently delivered an answer that wasn’t true.

From a traditional software perspective, this looks like a defect. Systems are expected to be deterministic and correct. If they produce false information, something is broken.

But large language models are not built like traditional software.

They are not databases.

They are not retrieval engines.

They are not designed to guarantee truth.

They are designed to generate plausible language.

Once you understand that, hallucinations stop looking like bugs. They start looking like an expected outcome of the system’s design.

This space is built for people who care about the future—not just the shiny version, but the human one. If that sounds like you, consider upgrading to a paid subscription. You’ll be helping to keep independent thinking alive and unfiltered.

What the System Is Actually Optimizing For

At a fundamental level, these models are trained to predict what comes next.

Given a prompt, they generate a likely continuation, one token at a time, based on patterns learned during training. The objective is coherence and plausibility, not verification.

This leads to a specific behavior:

If the model has strong patterns to draw from, it will produce structured, confident answers.

If the model has weak or incomplete patterns, it will still produce an answer, because generating an answer is what it is optimized to do.

It does not have a built-in mechanism to say, “I don’t know,” unless that behavior has been reinforced during training.

So when it lacks certainty, it fills in the gaps.

That gap-filling is what we call hallucination.
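To make the gap-filling concrete, here is a minimal sketch of next-token sampling. It is a toy, not how any particular model is implemented: the scores, the candidate tokens, and the function names are all made up for illustration. The point it shows is that the sampling step returns a token whether the distribution is sharply peaked or nearly flat. There is no branch for "I don't know."

```python
import math
import random


def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {tok: math.exp(s - peak) for tok, s in scores.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample_next_token(scores, rng):
    """Pick one token in proportion to its probability.

    Note what is missing: nothing here can decline to answer.
    """
    probs = softmax(scores)
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0], probs


# Hypothetical scores where the training patterns are strong.
strong_pattern = {"Paris": 9.0, "Lyon": 2.0, "Berlin": 1.0}

# Hypothetical scores where the patterns are weak: nearly a coin toss.
weak_pattern = {"1987": 1.1, "1992": 1.0, "2001": 0.9}

rng = random.Random(0)
strong_token, strong_probs = sample_next_token(strong_pattern, rng)
weak_token, weak_probs = sample_next_token(weak_pattern, rng)

print(strong_token, round(max(strong_probs.values()), 3))
print(weak_token, round(max(weak_probs.values()), 3))
```

In the weak case no candidate has even a 50% share of the probability, yet a specific year still comes out, and it reads exactly as fluently as the strong case.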

The Tradeoff Behind the Behavior

This behavior exists because of a tradeoff.

You can build a system that only responds when it is certain, but that system will be limited, cautious, and often unhelpful. It will refuse many requests and provide minimal output.

Or you can build a system that generates broadly, creatively, and continuously, accepting that some outputs will be incorrect.

Current AI systems are optimized toward the second approach.

They are designed to be useful across a wide range of inputs, even when certainty is low.

Hallucination is the cost of that flexibility.
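The tradeoff can be sketched with a few lines of arithmetic. The data below is invented, and the sketch assumes the system has a confidence score that loosely tracks correctness, which real models only approximate. Raising the threshold for answering improves accuracy on what gets answered, but shrinks how many requests get an answer at all.

```python
def coverage_and_accuracy(answers, threshold):
    """Answer only when confidence >= threshold.

    answers: list of (confidence, is_correct) pairs.
    Returns (share of requests answered, accuracy among those answered).
    """
    attempted = [ok for conf, ok in answers if conf >= threshold]
    coverage = len(attempted) / len(answers)
    accuracy = sum(attempted) / len(attempted) if attempted else None
    return coverage, accuracy


# Made-up (confidence, is_correct) pairs for eight requests.
answers = [
    (0.95, True), (0.90, True), (0.80, True), (0.70, False),
    (0.60, True), (0.50, False), (0.40, True), (0.30, False),
]

cautious = coverage_and_accuracy(answers, threshold=0.75)
permissive = coverage_and_accuracy(answers, threshold=0.0)

print("cautious:  ", cautious)    # (0.375, 1.0)
print("permissive:", permissive)  # (1.0, 0.625)
```

The cautious setting is never wrong here, but it stays silent on five of eight requests, including two it would have answered correctly. The permissive setting answers everything and is wrong three times.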

Why It Feels So Convincing

The more concerning aspect is not that hallucinations happen.

It’s that they sound correct.

The model produces answers with:

Clear structure

Confident tone

Logical flow

These are signals humans associate with credibility.

But in this case, they only show that the output matches patterns in the training data, not that it is true.

The system is good at sounding right, even when it is wrong.

Where Hallucination Becomes Useful

This is where the perspective shifts.

The same mechanism that produces hallucinations is also what enables creativity.

When the model fills in gaps, it is not just guessing randomly. It is recombining patterns from its training data to generate something that fits the structure of the request.

In some contexts, this is valuable.

Brainstorming ideas

Exploring alternatives

Generating rough drafts

Connecting unrelated concepts

In these cases, strict accuracy is less important than possibility generation.

The system’s willingness to “make things up” becomes a feature.

The Difference Between Facts and Possibilities

The key is understanding when you are asking for facts and when you are asking for possibilities.

If you need verified information, hallucination is a problem.

If you are exploring ideas, hallucination is part of the process.

The system does not inherently distinguish between those modes. The user has to.

This is a shift in responsibility.

The Role of the Human

AI systems do not reliably validate their own outputs.

That responsibility sits with the user.

You decide when verification is required.

You decide how much trust to assign to a response.

You decide what to do with the output.

This makes interaction with AI an active process.

You are not just receiving answers. You are interpreting them.

Why Eliminating Hallucinations Is Difficult

There is ongoing work to reduce hallucinations.

Better training methods

Integration with external data sources

Improved alignment techniques

These approaches can reduce how often hallucinations occur, but they do not eliminate the underlying dynamic.

As long as the system is generating language based on patterns rather than retrieving verified facts, some level of hallucination will persist.

It is a structural property of the approach.
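As a rough illustration of what checking against an external data source looks like, here is a minimal sketch. The index contents, the citation strings, and the function name are placeholders, not real sources or a real library API. It also shows the limit of the approach: the check can only confirm what the trusted index already contains, so anything outside it still needs a human decision.

```python
def split_by_verification(generated_citations, trusted_index):
    """Separate citations found in a trusted index from those that are not.

    trusted_index: set of normalized (lowercase, trimmed) source names.
    """
    verified, unverified = [], []
    for citation in generated_citations:
        key = citation.strip().lower()
        if key in trusted_index:
            verified.append(citation)
        else:
            unverified.append(citation)
    return verified, unverified


# Placeholder index standing in for a curated external source list.
trusted_index = {"example report a", "example report b"}

# Placeholder model output: two sources that exist, one that does not.
generated = ["Example Report A", "Example Report C", "example report b "]

verified, unverified = split_by_verification(generated, trusted_index)

print("verified:  ", verified)
print("unverified:", unverified)
```

An unverified citation is not proven false. It is only flagged as something the index cannot confirm.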

A More Accurate Mental Model

Instead of thinking of AI as a source of truth, it is more accurate to think of it as a generator of plausible outputs.

Sometimes those outputs align with reality.

Sometimes they don’t.

The system is best viewed as a collaborator in thinking, not an authority.

Practical Implications

Using AI effectively means adjusting your expectations.

Use it for exploration and drafting.

Use it to generate options.

Use it to accelerate thinking.

But when accuracy matters, verify.

This is not a limitation to be avoided. It is a constraint to be managed.

Hallucinations Are Good

Hallucinations are not an error in the traditional sense.

They are a consequence of a system designed to generate plausible language across a wide range of inputs.

The same mechanism that produces incorrect answers also produces useful ones.

The value comes from understanding the difference.

AI is not a truth engine.

It is a possibility engine.

And like any tool built for possibility, it requires judgment in how it is used.


AI is cool, but what if you could actually use it as your life coach?

That’s what 10xYOU is all about—turning AI into extra income, more focus, and healthier habits. It’s like thinkfuture’s practical twin—same curiosity, but built for action.

Check out our 10xYOU publications:

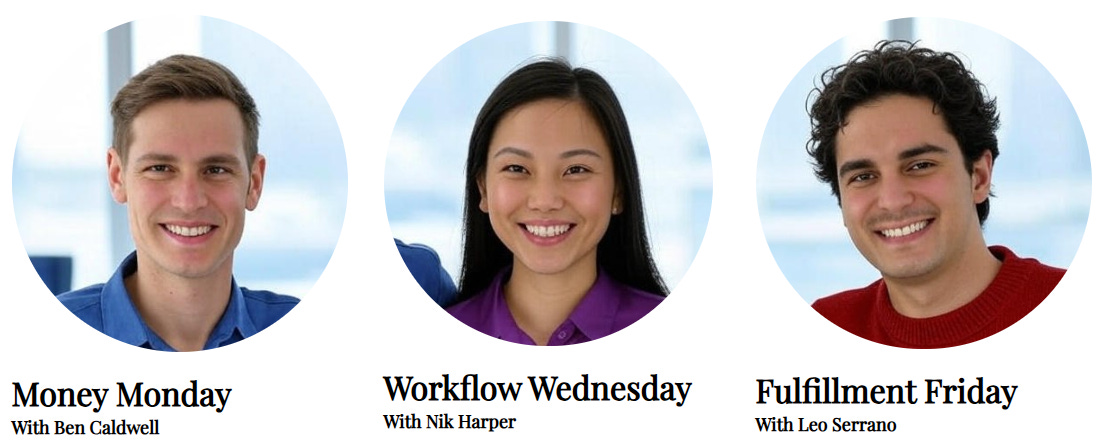

Money Monday with Ben Caldwell: Your AI Money Coach

Start your week with smart, stress-free money moves. Every Monday, Ben shares one actionable way to use AI to save, earn, or invest better. No fluff, no jargon—just practical steps you can use right away to make your money work harder for you.

Workflow Wednesday with Nik Harper: Your AI Productivity Coach

Every Wednesday, Nik shows you how to work smarter, not harder. From AI-powered tricks to practical workflow shifts, she’ll help you save time, cut stress, and actually enjoy your week. Quick reads, easy experiments, real results.

Fulfilment Friday with Leo Serrano: Your AI Wellness Coach

Fridays aren’t just the end of the week—they’re a reset. In Fulfillment Friday, Leo blends espresso-fueled storytelling, Nonna’s wisdom, and AI guidance to help you recharge and find balance. Each issue delivers a personal story, practical wellness strategies, and one simple “Friday Reset” challenge you can actually use before Monday.
